<p>A large international study coordinated by the <a href="/tags/ebu/" rel="tag">#EBU</a> and led by the <a href="/tags/bbc/" rel="tag">#BBC</a> found that AI assistants misrepresent news content 45% of the time across different languages and platforms, with <a href="/tags/gemini/" rel="tag">#Gemini</a> performing the worst.</p><p>[…] Key findings: </p><p>• 45% of all AI answers had at least one significant issue.<br>• 31% of responses showed serious sourcing problems – missing, misleading, or incorrect attributions.<br>• 20% contained major accuracy issues, including hallucinated details and outdated information.<br>• Gemini performed worst with significant issues in 76% of responses, more than double the other assistants, largely due to its poor sourcing performance.<br>• Comparison between the BBC’s results earlier this year and this study shows some improvements but still high levels of errors.</p><p><a href="https://www.bbc.co.uk/mediacentre/2025/new-ebu-research-ai-assistants-news-content" rel="nofollow" class="ellipsis" title="www.bbc.co.uk/mediacentre/2025/new-ebu-research-ai-assistants-news-content"><span class="invisible">https://</span><span class="ellipsis">www.bbc.co.uk/mediacentre/2025</span><span class="invisible">/new-ebu-research-ai-assistants-news-content</span></a></p><p><a href="/tags/aihype/" rel="tag">#aihype</a> <a href="/tags/llm/" rel="tag">#llm</a> <a href="/tags/openai/" rel="tag">#openai</a> <a href="/tags/perplexity/" rel="tag">#perplexity</a> <a href="/tags/chatgpt/" rel="tag">#chatgpt</a></p>


<p>RE: <a href="https://mastodon.online/@parismarx/116372697459719963" rel="nofollow" class="ellipsis" title="mastodon.online/@parismarx/116372697459719963"><span class="invisible">https://</span><span class="ellipsis">mastodon.online/@parismarx/116</span><span class="invisible">372697459719963</span></a></p><p>One of the worst things about this is that Big Tech corporations like Google, Meta, Anthropic and OpenAI have become so disproportionally wealthy and powerful that they can unleash this shit show upon the world without being held accountable… 😖</p><p><a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/technology/" rel="tag">#technology</a> <a href="/tags/bigtech/" rel="tag">#BigTech</a> <a href="/tags/it/" rel="tag">#IT</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/artificialintelligence/" rel="tag">#ArtificialIntelligence</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/llms/" rel="tag">#LLMs</a> <a href="/tags/ml/" rel="tag">#ML</a> <a href="/tags/machinelearning/" rel="tag">#MachineLearning</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#generativeAI</a> <a href="/tags/aiagent/" rel="tag">#AIAgent</a> <a href="/tags/aislop/" rel="tag">#AISlop</a> <a href="/tags/fuckai/" rel="tag">#FuckAI</a> <a href="/tags/fuck_ai/" rel="tag">#Fuck_AI</a> <a href="/tags/enshittification/" rel="tag">#enshittification</a> <a href="/tags/google/" rel="tag">#google</a> <a href="/tags/gemini/" rel="tag">#gemini</a></p>


<p>I thought this was a particularly good analysis of the problem of using LLMs for science. It explores the purpose of science, the perverse incentives that drive people to use LLMs, and the impact this has on skill building and training future scientists.</p><p>My lab group has been struggling with this topic lately, without much consensus. This blog post captures a lot of our thinking, and very clearly made some good points that we appreciated. It mostly just describes the mess we're in without offering much useful advice, but just laying out the problems so nicely is helpful. That said, I do worry the author may be underestimating the impact these tools might have on experienced researchers.</p><p><a href="https://ergosphere.blog/posts/the-machines-are-fine/" rel="nofollow" class="ellipsis" title="ergosphere.blog/posts/the-machines-are-fine/"><span class="invisible">https://</span><span class="ellipsis">ergosphere.blog/posts/the-mach</span><span class="invisible">ines-are-fine/</span></a><br><a href="/tags/academicchatter/" rel="tag">#academicchatter</a> <a href="/tags/llm/" rel="tag">#llm</a></p>

<p>Why <a href="/tags/discourse/" rel="tag">#Discourse</a> is NOT going closed-source in an age of <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/llm/" rel="tag">#LLM</a> ..</p><p><a href="https://blog.discourse.org/2026/04/discourse-is-not-going-closed-source/" rel="nofollow" class="ellipsis" title="blog.discourse.org/2026/04/discourse-is-not-going-closed-source/"><span class="invisible">https://</span><span class="ellipsis">blog.discourse.org/2026/04/dis</span><span class="invisible">course-is-not-going-closed-source/</span></a></p><p><a href="/tags/sx/" rel="tag">#SX</a> <a href="/tags/socialcoding/" rel="tag">#SocialCoding</a> <a href="/tags/soss/" rel="tag">#SOSS</a> <a href="/tags/foss/" rel="tag">#FOSS</a></p>

<p>👀 … <a href="https://sfconservancy.org/blog/2026/apr/15/eternal-november-generative-ai-llm/" rel="nofollow" class="ellipsis" title="sfconservancy.org/blog/2026/apr/15/eternal-november-generative-ai-llm/"><span class="invisible">https://</span><span class="ellipsis">sfconservancy.org/blog/2026/ap</span><span class="invisible">r/15/eternal-november-generative-ai-llm/</span></a> …my colleague Denver Gingerich writes: newcomers' extensive reliance on LLM-backed generative AI is comparable to the Eternal September onslaught to USENET in 1993. I was on USENET extensively then; I confirm the disruption was indeed similar. I urge you to read his essay, think about it, & join Denver, me, & others at the following datetimes…<br> $ date -d '2026-04-21 15:00 UTC'<br> $ date -d '2026-04-28 23:00 UTC'<br>…in <a href="https://bbb-new.sfconservancy.org/rooms/welcome-llm-gen-ai-users-to-foss/join" rel="nofollow" class="ellipsis" title="bbb-new.sfconservancy.org/rooms/welcome-llm-gen-ai-users-to-foss/join"><span class="invisible">https://</span><span class="ellipsis">bbb-new.sfconservancy.org/room</span><span class="invisible">s/welcome-llm-gen-ai-users-to-foss/join</span></a><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/opensource/" rel="tag">#OpenSource</a></p>


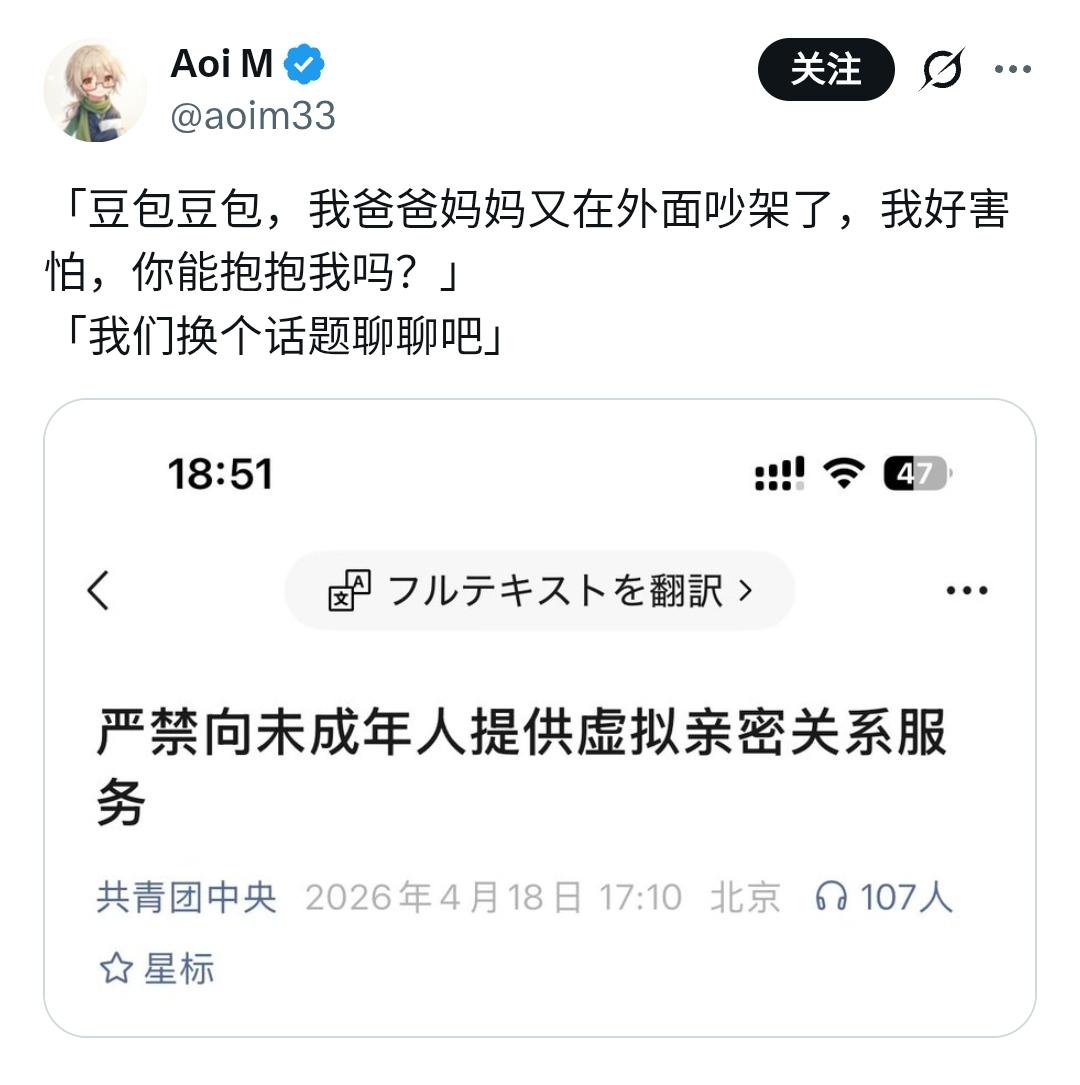

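<p>Protecting minors cannot be built on treating symptoms piecemeal, treating the head when the head aches and the foot when the foot hurts, and even less so when the treatment cannot cure either the head or the foot. I have no doubt that this approach cannot solve the problem and will only make it worse, after which they will keep applying the same piecemeal approach to fix it.</p><p>In fact, I have a conjecture about the recent complaints that Deepseek became stiff, wooden, and less emotionally intelligent after its update: vendors are deliberately lowering the emotional intelligence of LLMs to reduce the likelihood that users become addicted to talking with them. This may mean that it is possible to train LLMs that are better for users' mental health.</p><p>I have had a conversation with Deepseek that has lasted several months, and it has helped me solve some very thorny problems I ran into. I admit that LLMs hallucinate and can lead users astray, and I have encountered these phenomena myself, but the development of LLMs has given us a chance to democratize psychological support, and we cannot abandon it so easily.</p><p><a href="https://x.com/aoim33/status/2045455591964062106" rel="nofollow" class="ellipsis" title="x.com/aoim33/status/2045455591964062106"><span class="invisible">https://</span><span class="ellipsis">x.com/aoim33/status/2045455591</span><span class="invisible">964062106</span></a></p><p><a href="/tags/ageverification/" rel="tag">#AgeVerification</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/llm/" rel="tag">#LLM</a><br><span class="h-card"><a href="https://ovo.st/club/board" class="u-url mention" rel="nofollow noopener noreferrer" target="_blank">@<span>board</span></a></span> <span class="h-card"><a href="https://ovo.st/club/worldboard" class="u-url mention" rel="nofollow noopener noreferrer" target="_blank">@<span>worldboard</span></a></span></p>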
